Javascript has become one of the most popular and widely used languages due to the massive improvements it has seen and the introduction of the runtime known as NodeJS. Whether it's a web or mobile application, Javascript now has the right tools. This article will explain how the vibrant ecosystem of NodeJS allows you to efficiently scrape the web to meet most of your requirements.

Prerequisites

This post is primarily aimed at developers who have some level of experience with Javascript. However, if you have a firm understanding of Web Scraping but have no experience with Javascript, this post could still prove useful. Below are the recommended prerequisites for this article:

- ✅ Experience with Javascript

- ✅ Experience using DevTools to extract selectors of elements

- ✅ Some experience with ES6 Javascript (Optional)

⭐ Make sure to check out the resources at the end of this article to learn more!

Outcomes

After reading this post, you will be able to:

- Have a functional understanding of NodeJS

- Use multiple HTTP clients to assist in the web scraping process

- Use multiple modern and battle-tested libraries to scrape the web

Understanding NodeJS: A brief introduction

Javascript is a simple and modern language that was initially created to add dynamic behavior to websites inside the browser. When a website is loaded, Javascript is run by the browser's Javascript Engine and converted into machine code that the computer can understand.

For Javascript to interact with your browser, the browser provides a Runtime Environment (document, window, etc.).

This means that Javascript is not the kind of programming language that can interact with or manipulate the computer or its resources directly. Servers, on the other hand, are capable of directly interacting with the computer and its resources, which allows them to read files or store records in a database.

When introducing NodeJS, the crux of the idea was to make Javascript capable of running not only client-side but also server-side. To make this possible, Ryan Dahl, a skilled developer, took Google Chrome's V8 Javascript Engine and embedded it in a C++ program named Node.

So, NodeJS is a runtime environment that allows an application written in Javascript to be run on a server as well.

As opposed to how most languages, including C and C++, deal with concurrency, which is by employing multiple threads, NodeJS makes use of a single main thread and utilizes it to perform tasks in a non-blocking manner with the help of the Event Loop.

Putting up a simple web server is fairly simple as shown below:

If you have NodeJS installed, run the above code by typing node <YourFileNameHere>.js (without the < and >) in your terminal. Then open your browser and navigate to localhost:3000, where you will see the text “Hello World”. NodeJS is ideal for applications that are I/O intensive.

HTTP clients: querying the web

HTTP clients are tools capable of sending a request to a server and then receiving a response from it. Almost every tool that will be discussed in this article uses an HTTP client under the hood to query the server of the website that you will attempt to scrape.

Request

Request is one of the most widely used HTTP clients in the Javascript ecosystem. However, the author of the Request library has officially declared it deprecated. This does not mean it is unusable: quite a lot of libraries still use it, and it is every bit worth using.

It is fairly simple to make an HTTP request with Request:
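A minimal sketch of Request's callback style. The wrapper name fetchPage and the shape of the value passed to the callback are illustrative assumptions; only the request(url, callback) call itself is the library's API:

```javascript
// Fetch a page with Request and hand the result to a Node-style callback.
function fetchPage(url, callback) {
  // Required lazily, so the package is only needed once a request is made.
  const request = require('request'); // npm install request

  request(url, (error, response, body) => {
    if (error) {
      return callback(error);
    }
    callback(null, { status: response.statusCode, body });
  });
}
```

Calling fetchPage('https://example.com', (err, page) => console.log(err || page.status)) would print the HTTP status code; the URL here is only a placeholder.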

You can find the Request library on GitHub, and installing it is as simple as running npm install request.

You can also find the deprecation notice and what this means here. If you don't feel safe about the fact that this library is deprecated, there are other options down below!

Axios

Axios is a promise-based HTTP client that runs both in the browser and NodeJS. If you use TypeScript, then Axios has you covered with built-in types.

Making an HTTP request with Axios is straightforward. It ships with promise support by default, as opposed to the callbacks used by Request:
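A minimal sketch of the promise style. The function name and the Reddit JSON URL are illustrative assumptions; axios.get() returning a promise that resolves to a response with a data property is the library's documented behavior:

```javascript
// Promise style: .then() receives the response, .catch() any failure.
function fetchForumWithPromises() {
  // Required lazily, so the package is only needed once a request is made.
  const axios = require('axios'); // npm install axios

  return axios
    .get('https://www.reddit.com/r/programming.json') // illustrative URL
    .then((response) => response.data)
    .catch((error) => {
      console.error(`Request failed: ${error.message}`);
      throw error; // let the caller decide how to recover
    });
}
```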

If you fancy the async/await syntax sugar for the promise API, you can do that too. But since top-level await is only available in ES modules and not in CommonJS scripts, we will make use of an async function instead:
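A minimal sketch of the same request with async/await. The name getForum comes from the text; the URL and the choice to log errors instead of rethrowing them are assumptions:

```javascript
// async/await style: the same request, written as sequential code.
async function getForum() {
  // Required lazily, so a missing package surfaces inside the try block.
  try {
    const axios = require('axios'); // npm install axios
    const response = await axios.get('https://www.reddit.com/r/programming.json');
    console.log(response.data);
    return response.data;
  } catch (error) {
    // Log the failure; the function then resolves to undefined.
    console.error(`Request failed: ${error.message}`);
  }
}
```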

All you have to do is call getForum! You can find the Axios library on GitHub, and installing Axios is as simple as npm install axios.

SuperAgent

Much like Axios, SuperAgent is another robust HTTP client that has support for promises and the async/await syntax sugar. It has a fairly straightforward API like Axios, but SuperAgent has more dependencies and is less popular.

Regardless, making an HTTP request with SuperAgent using promises, async/await, or callbacks looks like this:

You can find the SuperAgent library on GitHub, and installing SuperAgent is as simple as npm install superagent.
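A minimal sketch of the three styles side by side. The function names and the URL are illustrative assumptions; .get(), .then() and .end() are SuperAgent's own methods, and res.body holds the parsed response:

```javascript
const FORUM_URL = 'https://www.reddit.com/r/programming.json'; // illustrative URL

// 1. Promises
function withPromises() {
  const superagent = require('superagent'); // npm install superagent
  return superagent.get(FORUM_URL).then((res) => res.body);
}

// 2. async/await
async function withAsyncAwait() {
  const superagent = require('superagent');
  const res = await superagent.get(FORUM_URL);
  return res.body;
}

// 3. Callbacks
function withCallback(callback) {
  const superagent = require('superagent');
  superagent.get(FORUM_URL).end((error, res) => {
    if (error) {
      return callback(error);
    }
    callback(null, res.body);
  });
}
```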

For the upcoming few web scraping tools, Axios will be used as the HTTP client.

Note that there are other great HTTP clients for web scraping, like node-fetch!

Regular expressions: the hard way

The simplest way to get started with web scraping without any dependencies is to use a bunch of regular expressions on the HTML string that you fetch using an HTTP client. But there is a big tradeoff. Regular expressions aren't as flexible and both professionals and amateurs struggle with writing them correctly.

For complex web scraping, the regular expression can also get out of hand. With that said, let's give it a go. Say there's a label with some username in it, and we want the username. This is similar to what you'd have to do if you relied on regular expressions:
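A minimal sketch of that approach. The HTML string and the username in it are made-up sample data standing in for a page fetched with an HTTP client:

```javascript
// Sample markup standing in for HTML fetched with an HTTP client.
const htmlString = '<label>Username: John Doe</label>';

// Capture everything between the opening and closing <label> tags.
const result = htmlString.match(/<label>(.+)<\/label>/);

// result[0] is the whole match, result[1] is the first capture group.
const labelText = result[1]; // "Username: John Doe"

// Drop the "Username: " prefix to keep only the name.
const username = labelText.split(': ')[1];

console.log(username); // John Doe
```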

In Javascript, match() called with a non-global regular expression returns an array whose first element (index 0) is the full match, followed by one element per capture group. In the second element (index 1), you will find the textContent or the innerHTML of the <label> tag, which is what we want. But this result contains some unwanted text (“Username: ”), which has to be removed.

As you can see, for a very simple use case the steps and the work to be done are unnecessarily high. This is why you should rely on something like an HTML parser, which we will talk about next.

Cheerio: Core jQuery for traversing the DOM

Cheerio is an efficient and light library that allows you to use the rich and powerful API of jQuery on the server-side. If you have used jQuery previously, you will feel right at home with Cheerio. It removes all of the DOM inconsistencies and browser-related features and exposes an efficient API to parse and manipulate the DOM.
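A minimal sketch of the jQuery-like API, assuming a one-element HTML string as input. The function name and the markup are illustrative:

```javascript
// Parse a small HTML string and modify it with jQuery-style calls.
function renameHeading() {
  // Required lazily, so the package is only needed when the function runs.
  const cheerio = require('cheerio'); // npm install cheerio

  const $ = cheerio.load('<h2 class="title">Hello world</h2>');

  $('h2.title').text('Hello there!'); // replace the text content
  $('h2').addClass('welcome');        // add a second class

  return $.html(); // serialize the modified document back to a string
}
```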

As you can see, using Cheerio is similar to how you'd use jQuery.

However, it does not work the same way that a web browser works, which means it does not:

- Render any of the parsed or manipulated DOM elements

- Apply CSS or load any external resource

- Execute Javascript

So, if the website or web application that you are trying to crawl is Javascript-heavy (for example a Single Page Application), Cheerio is not your best bet. You might have to rely on other options mentioned later in this article.

To demonstrate the power of Cheerio, we will attempt to crawl the r/programming forum on Reddit and get a list of post names.

First, install Cheerio and Axios by running the following command: npm install cheerio axios.

Then create a new file called crawler.js, and copy/paste the following code:

getPostTitles() is an asynchronous function that will crawl Reddit's old r/programming forum. First, the HTML of the website is obtained using a simple HTTP GET request with the axios HTTP client library. Then the HTML data is fed into Cheerio using the cheerio.load() function.

With the help of the browser DevTools, you can obtain the selector that is capable of targeting all of the post cards. If you've used jQuery, $('div > p.title > a') is probably familiar. This will get all the posts. Since you only want the title of each post individually, you have to loop through each post. This is done with the help of the each() function.

To extract the text out of each title, you must fetch the DOM element with the help of Cheerio (el refers to the current element). Then, calling text() on each element will give you the text.

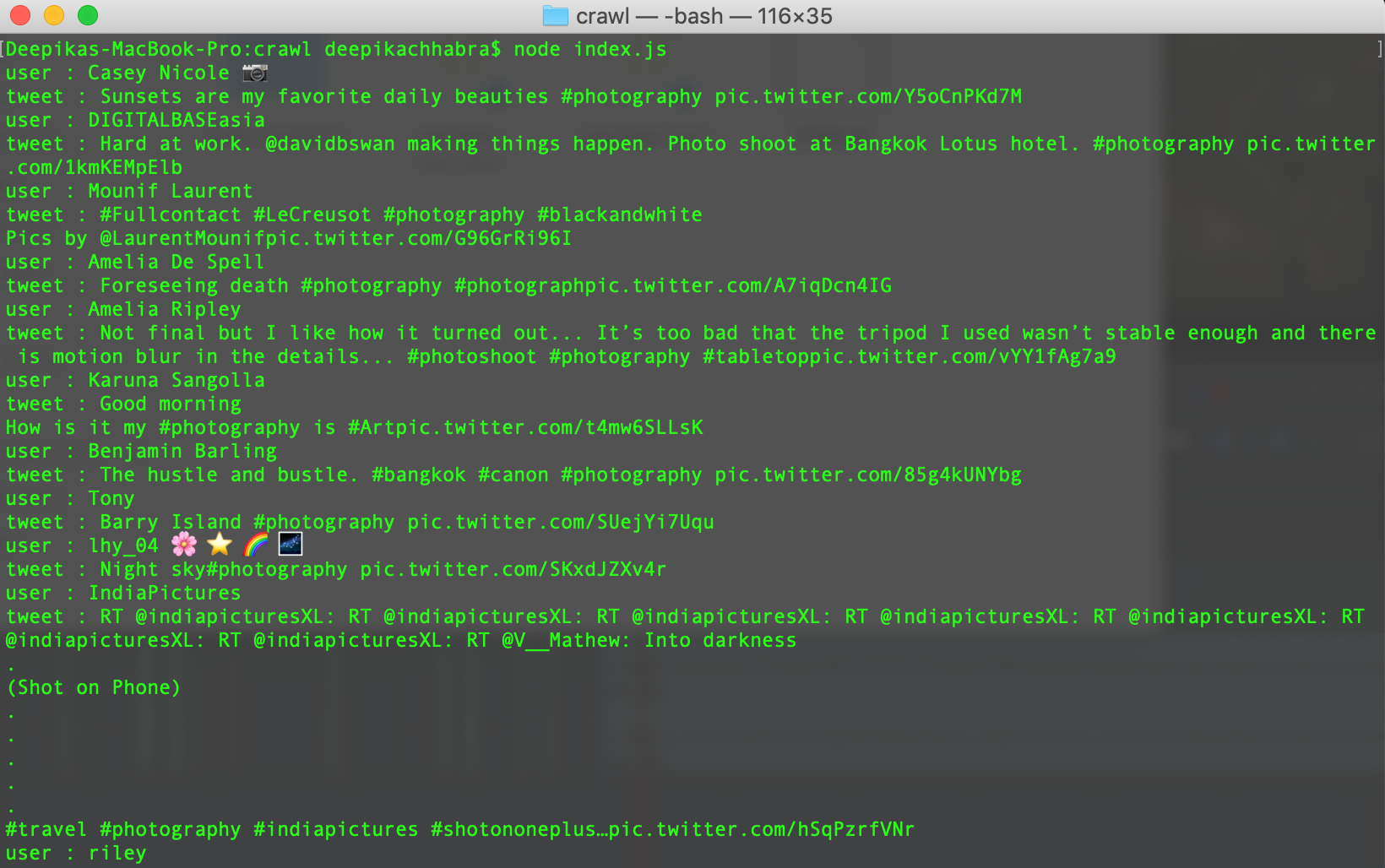

Now, you can pop open a terminal and run node crawler.js. You'll then see an array of about 25 or 26 different post titles (it'll be quite long). While this is a simple use case, it demonstrates the simple nature of the API provided by Cheerio.

If your use case requires the execution of Javascript and loading of external sources, the following few options will be helpful.

JSDOM: the DOM for Node

JSDOM is a pure Javascript implementation of the Document Object Model to be used in NodeJS. As mentioned previously, the DOM is not available to Node, so JSDOM is the closest you can get. It more or less emulates the browser.

Once a DOM is created, it is possible to interact with the web application or website you want to crawl programmatically, so something like clicking on a button is possible. If you are familiar with manipulating the DOM, using JSDOM will be straightforward.
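A minimal sketch of creating and manipulating a DOM with JSDOM, assuming a one-element HTML string as input. The function name and the markup are illustrative:

```javascript
// Build a DOM from a string and change it with standard DOM methods.
function buildDom() {
  // Required lazily, so the package is only needed when the function runs.
  const { JSDOM } = require('jsdom'); // npm install jsdom

  const { document } = new JSDOM('<h2 class="title">Hello world</h2>').window;

  const heading = document.querySelector('.title');
  heading.textContent = 'Hello there!';
  heading.classList.add('welcome');

  return heading.outerHTML; // '<h2 class="title welcome">Hello there!</h2>'
}
```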

As you can see, JSDOM creates a DOM. Then you can manipulate this DOM with the same methods and properties you would use while manipulating the browser DOM.

To demonstrate how you could use JSDOM to interact with a website, we will get the first post of the Reddit r/programming forum and upvote it. Then, we will verify if the post has been upvoted.

Start by running the following command to install JSDOM and Axios: npm install jsdom axios

Then, make a file named crawler.js and copy/paste the following code:

upvoteFirstPost() is an asynchronous function that will obtain the first post in r/programming and upvote it. To do this, axios sends an HTTP GET request to fetch the HTML of the URL specified. Then a new DOM is created by feeding the HTML that was fetched earlier.

The JSDOM constructor accepts the HTML as the first argument and the options as the second. The two options that have been added perform the following functions:

- runScripts: When set to “dangerously”, it allows the execution of event handlers and any Javascript code. If you do not have a clear idea of the credibility of the scripts that your application will run, it is best to set runScripts to “outside-only”, which attaches all of the Javascript specification provided globals to the window object, thus preventing any script from being executed on the inside.

- resources: When set to “usable”, it allows the loading of any external script declared using the <script> tag (e.g., the jQuery library fetched from a CDN).

Once the DOM has been created, you can use the same DOM methods to get the first post's upvote button and then click on it. To verify if it has been clicked, you could check the classList for a class called upmod. If this class exists in classList, a message is returned.

Now, you can pop open a terminal and run node crawler.js. You'll then see a neat string that will tell you if the post has been upvoted. While this example use case is trivial, you could build on top of it to create something powerful (for example, a bot that goes around upvoting a particular user's posts).

If you dislike the lack of expressiveness in JSDOM and your crawling relies heavily on such manipulations or if there is a need to recreate many different DOMs, the following options will be a better match.

Puppeteer: the headless browser

Puppeteer, as the name implies, allows you to manipulate the browser programmatically, just like how a puppet would be manipulated by its puppeteer. It achieves this by providing a developer with a high-level API to control a headless version of Chrome by default and can be configured to run non-headless.

Puppeteer is particularly more useful than the aforementioned tools because it allows you to crawl the web as if a real person were interacting with a browser. This opens up a few possibilities that weren't there before:

- You can get screenshots or generate PDFs of pages.

- You can crawl a Single Page Application and generate pre-rendered content.

- You can automate many different user interactions, like keyboard inputs, form submissions, navigation, etc.

It could also play a big role in many other tasks outside the scope of web crawling, like UI testing, assisting with performance optimization, etc.

Quite often, you will probably want to take screenshots of websites or get to know about a competitor's product catalog. Puppeteer can be used to do this. To start, install Puppeteer by running the following command: npm install puppeteer

This will download a bundled version of Chromium which takes up about 180 to 300 MB, depending on your operating system. If you wish to disable this and point Puppeteer to an already downloaded version of Chromium, you must set a few environment variables.

This, however, is not recommended. If you truly wish to avoid downloading Chromium and Puppeteer for this tutorial, you can rely on the Puppeteer playground.

Let's attempt to get a screenshot and PDF of the r/programming forum on Reddit. Create a new file called crawler.js, and copy/paste the following code:

getVisual() is an asynchronous function that will take a screenshot and PDF of the value assigned to the URL variable. To start, an instance of the browser is created by running puppeteer.launch(). Then, a new page is created. This page can be thought of like a tab in a regular browser. Then, by calling page.goto() with the URL as the parameter, the page that was created earlier is directed to the URL specified.

Once that is done and the page has finished loading, a screenshot and PDF will be taken using page.screenshot() and page.pdf() respectively. Finally, the browser instance is destroyed along with the page. You could also listen to the Javascript load event and then perform these actions, which is highly recommended at the production level.

When you run the code by typing node crawler.js in the terminal, after a few seconds you will notice that two files named screenshot.jpg and page.pdf have been created.

Also, we've written a complete guide on how to download a file with Puppeteer. You should check it out!

Nightmare: an alternative to Puppeteer

Nightmare is another high-level browser automation library like Puppeteer. It uses Electron, but is said to be roughly twice as fast as its predecessor PhantomJS, and it's more modern.

If you dislike Puppeteer or feel discouraged by the size of the Chromium bundle, Nightmare is an ideal choice. To start, install the Nightmare library by running the following command: npm install nightmare

Once Nightmare has been downloaded, we will use it to find ScrapingBee's website through a Google search. To do so, create a file called crawler.js and copy/paste the following code into it:

First, a Nightmare instance is created. Then, this instance is directed to the Google search engine by calling goto() once it has loaded. The search box is fetched using its selector. Then the value of the search box (an input tag) is changed to “ScrapingBee”.

After this is finished, the search form is submitted by clicking on the “Google Search” button. Then, Nightmare is told to wait until the first link has loaded. Once it has loaded, a DOM method will be used to fetch the value of the href attribute of the anchor tag that contains the link.

Finally, once everything is complete, the link is printed to the console. To run the code, type node crawler.js in your terminal.

Summary

That was a long read! But now you understand the different ways to use NodeJS and its rich ecosystem of libraries to crawl the web in any way you want. To wrap up, you learned:

- ✅ NodeJS is a Javascript runtime that allows Javascript to be run server-side. It has a non-blocking nature thanks to the Event Loop.

- ✅ HTTP clients such as Axios, SuperAgent, Node fetch and Request are used to send HTTP requests to a server and receive a response.

- ✅ Cheerio abstracts the best out of jQuery for the sole purpose of running it server-side for web crawling but does not execute Javascript code.

- ✅ JSDOM creates a DOM per the standard Javascript specification out of an HTML string and allows you to perform DOM manipulations on it.

- ✅ Puppeteer and Nightmare are high-level browser automation libraries that allow you to programmatically manipulate web applications as if a real person were interacting with them.

While this article tackles the main aspects of web scraping with NodeJS, it does not talk about web scraping without getting blocked.

If you want to learn how to avoid getting blocked, read our complete guide, and if you don't want to deal with this, you can always use our web scraping API.

Happy Scraping!

Resources

Would you like to read more? Check these links out:

- NodeJS Website - Contains documentation and a lot of information on how to get started.

- Puppeteer's Docs - Contains the API reference and guides for getting started.

- Playwright - An alternative to Puppeteer, backed by Microsoft.

- ScrapingBee's Blog - Contains a lot of information about Web Scraping goodies on multiple platforms.

So you’ve probably heard of Web Scraping and what you can do with it, and you’re probably here because you want some more info on it.

Web Scraping is basically the process of extracting data from a website, that’s it.

Today we’re going to look at how you can start scraping with Puppeteer for NodeJs.

Featured on

This article was featured already on multiple pages such as:

Javascript Daily’s Twitter

NodeJs Weekly – Issue #279

Thank you to everyone! 🔥

What is Puppeteer?

Puppeteer is a library created for NodeJs which basically gives you the ability to control everything on the Chrome or Chromium browser, with NodeJs.

You can do things like a normal browser would do and a normal human would, for example:

- Open up different pages ( multiple at the same time )

- Move the mouse and make use of it just like a human would

- Press the keyboard and type stuff into input boxes

- Take screenshots programmatically for different situations

- Generate PDF’s from website pages

- Automate specific actions for websites

and many many more things

Puppeteer is created and maintained by the folks from Google, and even though the project is still pretty new to the market, it has skyrocketed past all the other competitors (NightmareJs, Casper, etc.) with over 40,000 stars on GitHub.

Setup of the project

The first thing you need to do is make sure you have NodeJs installed on your PC or Mac.

After that you can initiate your first project on a new and empty folder with npm.

You can simply do this with the Terminal by going to the newly created folder and then running the following command:

Now you can input all the project details and you also can just hit Enter

After the setup you should now have a package.json file with content that looks similar to this:

Installing dependencies

Now we can start the installation of the needed Packages

Here’s what we’re going to need

- puppeteer

So we are going to use npm install

While this is installing, I’m going to take the time to explain what Puppeteer is.

Puppeteer is an API that lets you manage the Chromium Browser with code written in NodeJs.

And the cool part about this is that Web Scraping with Puppeteer is very easy and beginner friendly.

Even beginners in Javascript can start to scrape the web with Puppeteer because of its simplicity and because it is straightforward.

Preparing the example

Now that we’re done with the boring stuff, let’s actually create an example just so that we can confirm that it’s working and see it in action.

Here is what we are going to build so that you get used to Puppeteer and understand how it works.

Let’s create a simple web scraper for IMDB with Puppeteer.

And here is what we need to do

- Initiate the Puppeteer browser and create a new page

- Go to the specified movie page, selected by a Movie Id

- Wait for the content to load

- Use evaluate to tap into the html of the current page opened with Puppeteer

- Extract the specific strings / text that you want to extract using query selectors
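The steps above can be sketched as follows. This is a rough outline under stated assumptions, not the scraper discussed in this post: the three selectors are hypothetical placeholders that must be replaced with ones found through DevTools, and the names buildMovieUrl, SELECTORS and scrapeMovie are illustrative:

```javascript
// Build the page address from an IMDB movie id such as tt6763664.
const buildMovieUrl = (movieId) => `https://www.imdb.com/title/${movieId}/`;

// Hypothetical placeholder selectors; look up the real ones in DevTools.
const SELECTORS = {
  title: 'h1',
  rating: '.rating-value',
  ratingCount: '.rating-count',
};

async function scrapeMovie(movieId) {
  // Required lazily, so a missing package surfaces as a rejected promise.
  const puppeteer = require('puppeteer');

  // 1. Start the browser and open a new page.
  const browser = await puppeteer.launch();
  try {
    const page = await browser.newPage();

    // 2. Go to the movie page selected by its id.
    await page.goto(buildMovieUrl(movieId), { waitUntil: 'networkidle0' });

    // 3. Wait for the content to load.
    await page.waitForSelector(SELECTORS.title);

    // 4 and 5. Run code inside the page and extract text via query selectors.
    return await page.evaluate((selectors) => {
      const textOf = (selector) => {
        const element = document.querySelector(selector);
        return element ? element.innerText.trim() : null;
      };
      return {
        title: textOf(selectors.title),
        rating: textOf(selectors.rating),
        ratingCount: textOf(selectors.ratingCount),
      };
    }, SELECTORS);
  } finally {
    await browser.close();
  }
}
```

Calling scrapeMovie('tt6763664').then(console.log) would print an object with the three fields, with null for any selector that did not match.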

Seems pretty easy, right?

Building the IMDB Scraper

I’m just going to give you a quick snippet of code and then we’re going to talk about it just a bit.

I am using the Google DevTools to check the html content and the classes so that I can generate a query selector for the Title, Rating and RatingCount

Learning the Selectors and how they work is very useful for this if you want to build custom selectors for different parts of the website that you want to scrape.

Here’s what I’ve built.

You can test out exactly this code and after running it you should see something like this

And of course, you can edit the code and improve it to go and scrape more details.

This was just for demonstration purposes so that you can see how powerful Web Scraping with Puppeteer is.

This code was written and tested by me in 15 minutes maximum, and I’m just trying to emphasize how easily and quickly you can do certain things with Puppeteer.

How to run it

There are multiple ways of running the code and I am going to show you 2 ways of doing that.

Via the terminal

You can use the terminal to run it like you’ve probably heard of and you can do that with a simple command just like this:

And of course, you need to make sure you are in the right project directory with your terminal before actually running the code.

And instead of index.js, you can specify whatever file you want to run / execute.

Via an editor ( VSCode )

And also you can run it directly with an editor that has the option to do so. In my case, I am using both VSCode and phpStorm

You can run it very easily with VSCode by clicking the Debugger tab and then just running it, simple and nice.

And of course, you can change the actual movie that you want to scrape by easily editing this part of the code:

Where you can input your actual movie id that you get from any IMDB Movie Urls that look like:

Where the actual movie id is this tt6763664.

How to visually debug with Puppeteer

Before I’m going to end this short tutorial, I want to give you the best snippets of code that you can use when building scrapers with Puppeteer.

Go ahead and replace the line where you initialize the browser, with this:
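A minimal sketch of launching the browser with a visible window. headless is a documented Puppeteer launch option; the names debugLaunchOptions and launchVisibleBrowser are illustrative:

```javascript
// headless: false makes Chromium open a visible window.
const debugLaunchOptions = { headless: false };

async function launchVisibleBrowser() {
  // Required lazily, so the package is only needed when a browser is launched.
  const puppeteer = require('puppeteer');
  return puppeteer.launch(debugLaunchOptions);
}
```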

What is this going to do?

This is basically going to tell the Chromium browser to NOT use the headless mode, meaning it will show up on your screen and do all the commands you tell it to so that you can see it visually.

Why is this powerful?

Because of the simple fact that you can see and pause with a debugger on any point of the execution and check out what is exactly happening with your code.

This is very powerful when building it for the first time and when checking for errors.

You should not use this mode in a production build, use it for development only.

Scraping dynamically rendered pages

This is the reason Puppeteer is so cool, it is a browser that renders each page just like you would when you access it via your browser.

Why is this helpful?

With Puppeteer you can wait for certain elements on the page to be loaded up / rendered until you start scraping.

This is a massive advantage when you are dealing with

- Websites that load just a bit of content and the rest is loaded via ajax calls

- The content is loaded separately via multiple ajax calls

- bonus: Even when you are dealing with iframes and multiple frames inside of a page

Puppeteer can handle everything that I have had to deal with regarding dynamic websites.

How?

Let’s say you have a page that you are loading, and that page requests content via an ajax call.

You want to make sure that all that content is loaded fully before it starts to parse, because if the content that you are trying to parse is not there when the parsing happens, everything goes to waste.

You can easily handle this with the following statements
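One way to express this, as a sketch: wait for the network to go quiet, then wait for the element you intend to parse. The helper name openAndWait and its parameters are illustrative; waitUntil and waitForSelector are Puppeteer's own API:

```javascript
// Load a page and wait until the content we want to parse is present.
async function openAndWait(page, url, contentSelector) {
  // Resolve once there have been no network connections for at least 500 ms.
  await page.goto(url, { waitUntil: 'networkidle0' });

  // Then wait until the element we want to scrape exists in the DOM.
  await page.waitForSelector(contentSelector);
}
```

Here page is a Puppeteer page created with browser.newPage(), and contentSelector is whatever selector identifies the ajax-loaded content on your target site.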

More debugging tips

I feel like when you are starting out, debugging tips are the best because you try to do certain things and you don’t know for sure if they work and you just want to have the tools to debug your work and make it happen.

Slowing down everything

When you are doing scrapers with Puppeteer, you have the option to give a delay to the browser so that it slows down every action that you program it to do.
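A minimal sketch of that option. slowMo is a documented Puppeteer launch option taking a delay in milliseconds; combining it with a visible window and the names used here are illustrative choices:

```javascript
// slowMo delays every Puppeteer operation by the given number of milliseconds.
const slowLaunchOptions = {
  headless: false, // show the window so the slowed-down actions are visible
  slowMo: 250,
};

async function launchSlowBrowser() {
  // Required lazily, so the package is only needed when a browser is launched.
  const puppeteer = require('puppeteer');
  return puppeteer.launch(slowLaunchOptions);
}
```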

And this is basically going to slow it down by 250ms

Making use of an integrated debugger;

This is also included to any kind of work you are doing with NodeJs so this tip will either blow your mind or you’ve known it already.

Usage of a debugger;

I personally use Visual Studio Code and PhpStorm with NodeJs plugin

If you don’t have a PhpStorm or WebStorm license, no worries, you can use VSCode

How do you make use of the debugger?

You simply need to either set a breakpoint or write debugger; in your code.

And when you run it, it will then stop at exactly the line where you put the breakpoint or the debugger.

And how is this powerful?

If you still don’t know what I’m talking about, now after you are stuck in the debugger, you can access any variable available in that specific time, run code and inspect whatever it is you need.

If you still don’t use the debugger, you are missing out.

Bonus snippets

Before ending the actual code related content for this web scraping tutorial, I will give you a cool snippet to play around and also to make use of when needed.

Taking screenshots

Taking a screenshot of the current page opened with Puppeteer can be very useful for testing, debugging, and more.

Why is this useful?

It’s because, besides web scraping, you can use it for rendering dynamic pages and generating screenshots / previews for any page that you want to access.

You can easily do that with the following command
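A minimal sketch, assuming page is an open Puppeteer page. path and fullPage are documented options of page.screenshot(); the helper name and the default file name are illustrative:

```javascript
// Save a screenshot of the whole page and return the file path used.
async function saveScreenshot(page, path = 'screenshot.png') {
  await page.screenshot({
    path,           // where the image is written
    fullPage: true, // capture the full scrollable page, not just the viewport
  });
  return path;
}
```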

And you can place this wherever in the code where you want to take a screenshot and save it.

You can also check out the other parameters for the screenshot function from the actual Puppeteer .screenshot() function because there are a lot of other interesting parameters that you can give and make use of.

Connecting to a proxy

Connecting to a proxy can help in many cases where you either want to avoid getting banned on your servers or you want to test a website that is not accessible to your server’s country location or many other reasons.

It can be easily done with just one line of extra arguments passing when initiating the puppeteer browser.

If you have a username and password for your proxy server, then it would look something like this:
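A sketch of both cases, assuming the proxy is passed through Chromium's --proxy-server flag and credentials are supplied with page.authenticate(). Both are real mechanisms, but they may differ from the snippet this post originally showed. IP:PORT, USERNAME and PASSWORD are placeholders:

```javascript
const PROXY_ADDRESS = 'IP:PORT'; // placeholder: your proxy's address

// The one extra line: pass the proxy to Chromium as a launch argument.
const proxyLaunchOptions = {
  args: [`--proxy-server=${PROXY_ADDRESS}`],
};

async function openPageThroughProxy() {
  // Required lazily, so the package is only needed when a browser is launched.
  const puppeteer = require('puppeteer');

  const browser = await puppeteer.launch(proxyLaunchOptions);
  const page = await browser.newPage();

  // Only needed when the proxy asks for credentials.
  await page.authenticate({ username: 'USERNAME', password: 'PASSWORD' });

  return { browser, page };
}
```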

Where of course you would have to replace the USERNAME, PASSWORD and the IP & PORT.

Navigating to new pages

Navigating to new pages with puppeteer and nodejs can be done very easily.

At the same time it can be a bit tricky sometimes.

Here’s what I mean by that:

When you either give an await page.goto() command or use a click function to click on a link with await page.click(), a new page is going to load.

The tricky part is to make sure the new page has been loaded properly and it actually is the page you are looking for.

At first, you can do something like this:
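A minimal sketch of the click-then-wait pattern, assuming page is an open Puppeteer page. The helper name and the linkSelector parameter are illustrative. The wait is registered before the click so that a fast navigation cannot be missed:

```javascript
// Click a link and wait for the resulting navigation to settle.
async function clickAndWaitForNavigation(page, linkSelector) {
  await Promise.all([
    // Resolves once there have been no network connections for at least 500 ms.
    page.waitForNavigation({ waitUntil: 'networkidle0' }),
    page.click(linkSelector),
  ]);
}
```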

Which will basically click on a selector that is a link and start the navigation to the next page.

With the waitForNavigation function you are basically waiting for the next page to load and to waitUntil there are no extra requests in the background for at least 500 ms.

This can work pretty well for most websites but there are cases, depending on the website that you are scraping, where this doesn’t work how you wanted it to because of constant requests in the background or because the website can be dynamically rendered.

In that case, the best option that I see ( and correct me or add to it in the comments ) is to wait for a specific selector that you know is going to exist in the page you want to access next.

Here is how you can do that
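A minimal sketch, assuming page is an open Puppeteer page. The helper name and both selector parameters are illustrative:

```javascript
// Click a link, then wait for an element that only exists on the next page.
async function clickAndWaitForSelector(page, linkSelector, nextPageSelector) {
  await page.click(linkSelector);
  await page.waitForSelector(nextPageSelector);
}
```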

Where you would need to specify a selector that is only available on the next page you are expecting to be loaded.

What you shouldn’t do

And of course, it comes to this part where I need to tell you that Scraping is a gray area and not everyone accepts it.

Since you’re basically using someone else’s bandwidth and resources ( when you go to a page and scrape it ), you should be respectful and do it in a mannered way.

Don’t overdo it, know when to stop and what is exceeding the limit.

But how can I know that?

Think of what it actually means to go and scrape 10,000 users or images from someone else’s site and how that will impact the person running the site.

Think of what you would not like to have someone do to your website and don’t do that to others too.

If it seems shady, it probably is and you should probably not do it.

PS: Make sure to read the Terms of Service / Terms of Usage of the specific websites. Some have clear specific terms that don’t allow you to scrape and automate anything. ( Instagram for example )

Resources to Learn

Here is a list of resources that will definitely help you with nodejs scraping with puppeteer and more.

These will set the base of your scraping knowledge and improve your existing one.

Want to learn more?

Hopefully you will give this a try and test the code for yourself. Puppeteer is very powerful, and you can do a lot with it, and fast.

Also if you want to learn more and go much more in-depth with the downloading of files, I have a great course with more hours of good content on web scraping with nodejs.